The Scenario

It’s the Friday before a major holiday and an urgent email hits your inbox. Your client, who has been a dream to work with over the past few months, has a problem.

Two seconds ago you were thinking about kicking your feet back and relaxing, but now your adrenaline is surging. The CEO (who has never been mentioned before now) is finally taking a look at the new site you’ve developed, and the menu is “completely broken.”

Did you actually think the corporate executives were going to look at the new site with a Mac? A dizzying array of questions zips through your mind. “How could this happen? I checked the site on all of the major browsers!” you say to yourself as the world around you spirals.

After some deep breathing exercises and a bit of inquiry, it becomes apparent that the browser in question is Microsoft Edge on Windows 10. To make matters worse, the site wasn’t actually tested on any browsers beyond the boundaries of your Mac devices. You’ve been loyal to Apple for as long as you can remember, but that won’t help now.

Although this story is anecdotal, it’s based on real events that happen to developers every day. Perhaps you will get lucky and only people with the most up-to-date browsers will see your work, but eventually, this approach will catch up with you. Let’s take a look at some ways to mitigate this problem, and perhaps help you sleep a little better at night by knowing that your websites look great for everyone.

But first, one more quick story.

I recently worked on a WebDevStudios (WDS) client project where a date-picker was specified in the design mock-up. I was excited to use an HTML5 input for this and thought it was working pretty well, with the only obvious downside being that I couldn’t add custom styles to every aspect of it. This solution seemed to work great along the way as I developed the site using Chrome on my (ahem) Mac.

But funny things started happening as I tested other browsers. I found that the date-picker was not displaying correctly in Safari, which came as a surprise to me. As of July 2019, the HTML5 date input, which I had assumed was widely supported, is not actually supported by the newest version of Safari, and it was not behaving as expected in a few other browsers either.

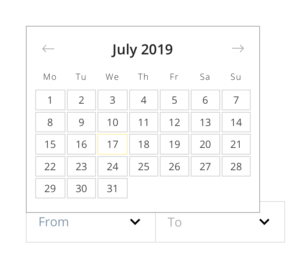

I expected to see a date picker similar to this:

But instead, this is what I saw in Safari—just a cursor:

If I hadn’t tested with Safari and the other platforms, I wouldn’t have caught this problem. Safari holds about 13.5% of the browser market, so there’s a pretty significant chance someone would have been affected. Ultimately, I used a jQuery library to supplement the date-picker, and it solved my problem. Without cross-browser testing, though, this lurking issue might have broken the functionality of the page for those visitors.
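The date-picker problem above is the kind of gap that a small feature check can flag at runtime. Here is a minimal sketch in TypeScript, not the code used on the project: it relies on the fact that browsers report an input's type as "text" when they don't recognize the requested type. The names `InputLike` and `supportsInputType` are made up for this illustration, and the element factory is passed in so the check can be exercised outside a browser.

```typescript
// Minimal shape of the element we need; a real HTMLInputElement satisfies it.
interface InputLike {
  type: string;
  setAttribute(name: string, value: string): void;
}

// Returns true when the element keeps the requested type instead of
// falling back to "text", which is what browsers do for unknown types.
function supportsInputType(
  type: string,
  createInput: () => InputLike
): boolean {
  const input = createInput();
  input.setAttribute("type", type);
  return input.type === type;
}

// Assumed browser usage:
// const hasDateInput = supportsInputType("date", () =>
//   document.createElement("input")
// );
// if (!hasDateInput) {
//   // load a fallback date-picker library here
// }
```

Keep in mind that this only tells you whether the browser recognizes the input type. It says nothing about how the native picker looks or behaves, so it complements testing on real browsers rather than replacing it.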

Enter Cross-Browser Testing

Situations like the one above can be significantly curtailed with the proper approach. There are two common methods for cross-browser compatibility testing.

The first is to purchase all of the computers and devices required to test applicable browsers. Note that you can use a virtual machine to fill in the gaps if you don’t have all of the operating systems available. One of the major advantages of this approach is speed. If you need to test something, just open up the device and test. But that also means having to obtain and store many tablets, phones, and computers in your work space. It also means keeping them charged and maintained. You’ll probably need to document your tests too, and managing screenshots and videos can be time-consuming.

The second is cloud-based testing. Services such as CrossBrowserTesting by SmartBear and BrowserStack let you enter your site’s URL and then run tests on actual browsers hosted by the service provider.

There are many pros to this approach. For instance, you don’t need to stockpile computers and devices. You can also automate tests on many browsers at once, saving tons of valuable time. Results of these tests can be shared as links.

One of the cons is that you’re testing across the internet, so there may be considerable delays compared to having an actual device in hand. Cost is another factor, but buying all of the necessary devices could quickly eclipse the yearly price of a cloud-based testing service.
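Whichever approach you choose, it helps to write down which browser and operating system combinations you actually intend to cover. The sketch below builds such a list in TypeScript. The browser and OS names are placeholders for illustration, not a recommendation, and the rule that Safari is only tested on Apple platforms is an assumption of this example. `Target`, `isPlausible`, and `buildMatrix` are hypothetical names and not part of any testing service’s API.

```typescript
type Target = { os: string; browser: string };

// Placeholder lists; substitute the targets your client cares about.
const operatingSystems = ["Windows 10", "macOS", "iOS", "Android"];
const browsers = ["Chrome", "Firefox", "Safari", "Edge"];

// Assumption for this sketch: Safari is only tested on Apple platforms.
function isPlausible(target: Target): boolean {
  if (target.browser === "Safari") {
    return target.os === "macOS" || target.os === "iOS";
  }
  return true;
}

// Pair every OS with every browser and keep the plausible combinations.
function buildMatrix(osList: string[], browserList: string[]): Target[] {
  const matrix: Target[] = [];
  for (const os of osList) {
    for (const browser of browserList) {
      const target: Target = { os, browser };
      if (isPlausible(target)) {
        matrix.push(target);
      }
    }
  }
  return matrix;
}

const matrix = buildMatrix(operatingSystems, browsers);
```

A list like this can double as a checklist for manual testing on real devices or as the input for an automated run on a cloud service.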

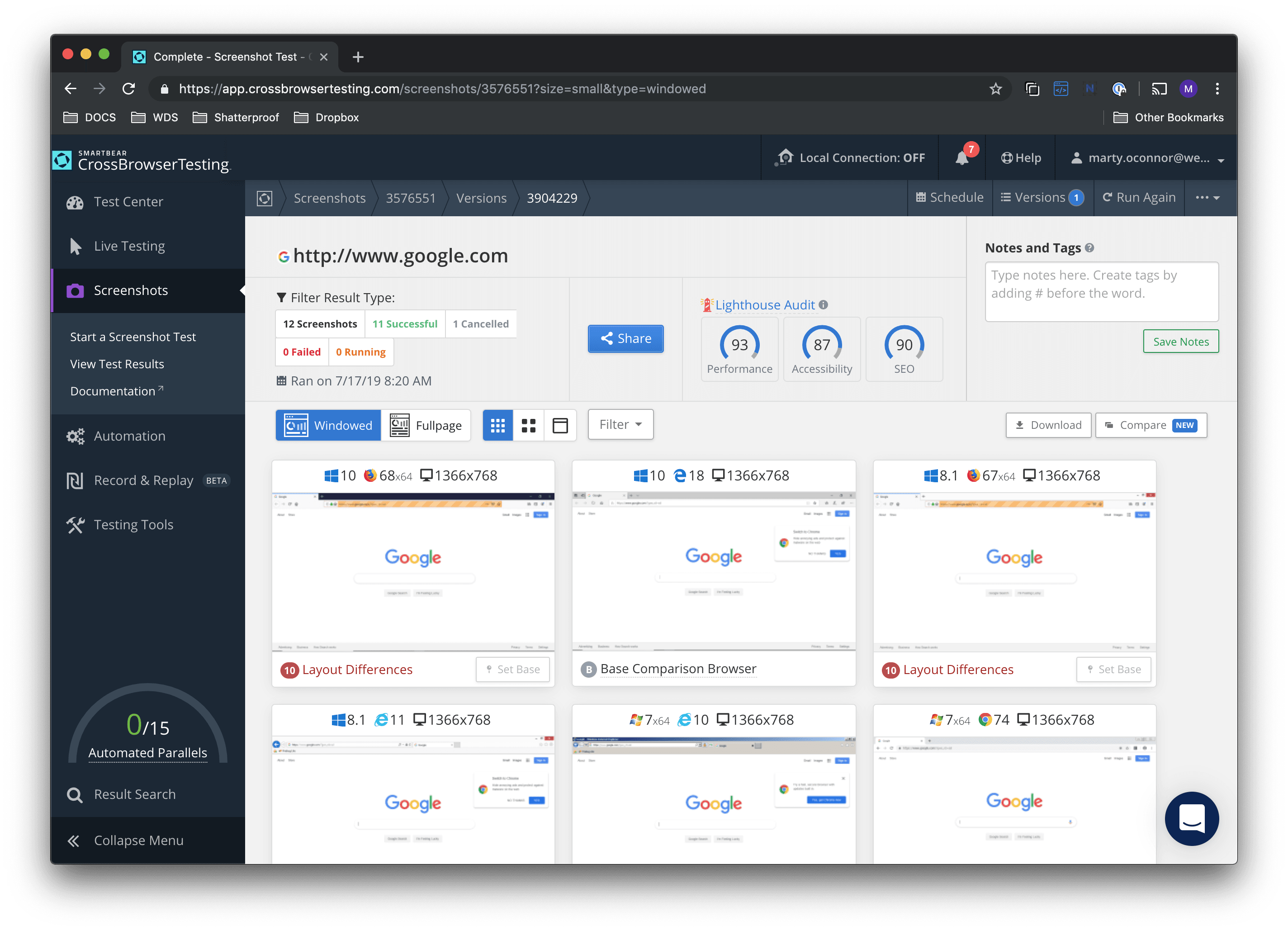

Automated testing saves massive amounts of time. Here, I’ve run a quick test of Google. A full-page rendering of the site is now available, as well as a description of layout differences between each type of browser. The service has even run the site through Google’s own Lighthouse for performance, accessibility, and SEO ratings. This set of tests can now be shared through a link.

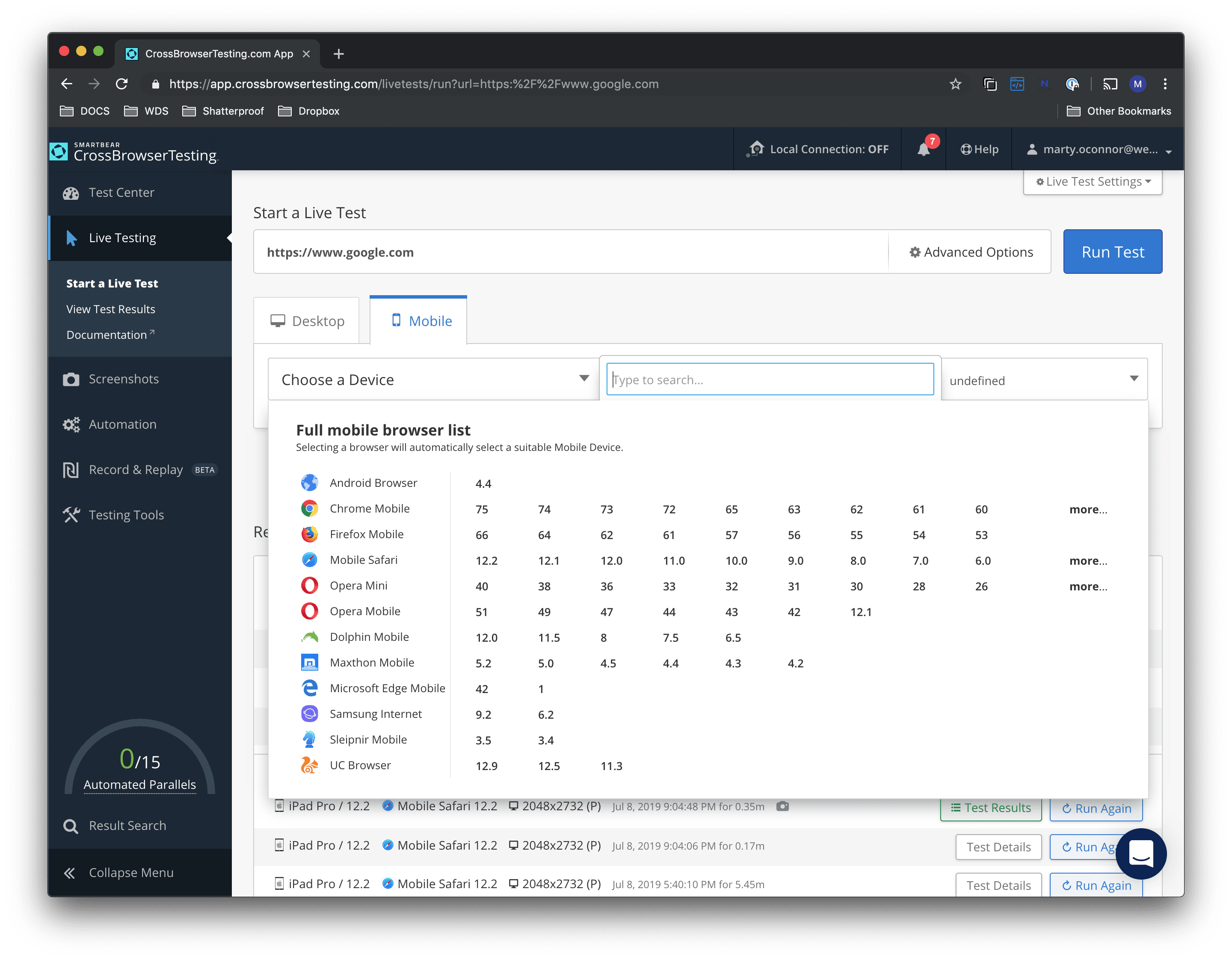

Live testing allows you to run an actual browser and test out aspects of your page in real time. As you can see, the browser choices are practically endless:

If you’re new to all of this, perhaps start with an online service, and add devices as you feel you need them. Most developers benefit from a hybrid of the two approaches.

At WDS, we emphasize delivering consistent, beautiful experiences for all of our clients’ target browsers. To help maintain this quality standard, we use a combination of both cloud-based cross-browser testing and actual devices.

In general, a good philosophy for testing is to test early and test often. If you can catch bugs and issues earlier in your process, then you’ll feel more relaxed and confident about the product you’re delivering. You’ll know that everyone’s experience will be a great one, and you’ll also have a course of action if and when issues do arise. It’s a big world with many browsers, so get out there and make sure they’re all able to display your site in its best light.
